As spotted by ZDNet, GNOME's AI assistant, Newelle, is now ready for its 1.0 release. As you might imagine from a Linux project, you have a lot of control over Newelle, including not installing it at all if an AI assistant isn't something you want on your PC. If you do, you can pick and choose which LLM Newelle uses rather than making do with a preset one.

Source: https://www.xda-developers.com/linuxs-gnome-based-ai-assistant-released/

It looks like it's very similar to Alpaca. I suppose it has a few extra niceties like the ability to read documents and generate commands... Mmm, I would be very careful with that.

The question here is: how long will I get to decide that? To be honest, I wouldn't be surprised if it ends up built into the GNOME desktop and you can't uninstall it.

Not only generating commands, but running them. To be fair, though: for it to do that, you have to use Flatseal to grant it the necessary permissions.

That's what I meant, sorry... but I would like to be able to see the command before I agree to run it.

And here (post #7, the lucky number) is finally the real deal:

It would be great to have an AI that could handle all the manual tasks around a PC. I saw a PC with an array of built-in mics and twin speakers, the idea being that you just tell the AI what to do and it says 'yes.' It can run a smaller LLM locally, but it isn't really powerful enough to run a large one.

| Model size | Parameters | Recommended VRAM |
|------------|------------|------------------|
| Small      | 7B         | 8 GB             |
| Medium     | 13B        | 12 GB            |
| Large      | 30B        | 24 GB            |

(On top of that, you need at least kernel 6.15 plus the firmware.)

I can just imagine.

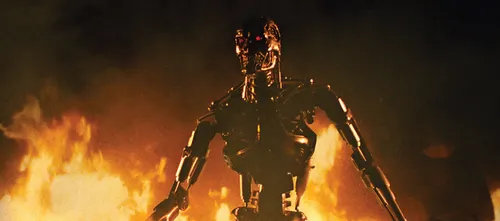

A.I.: "I'm sorry, 'open a terminal' is not in my vocabulary, but I can launch the latest Terminator. Its name? rm -rf"

Now there are reports that Ubuntu plans to use "some" AI in the near future as well.

One exciting example of this is bringing first-class speech-to-text and text-to-speech to Ubuntu. I don’t see these as “AI features”, I see them as critical accessibility features that can be dramatically improved through the adoption of LLMs with minimal (if any) drawbacks.

Here is the original post, followed by the discussion:

Here is a thread about this topic:

I wonder how "local" it will be, given the limited computing power of the thin clients I mostly use.

Unless highly optimized models arrive that don't consume a lot of resources, I wonder where the computing power will come from.

It will come from all that RAM and all those SSDs that you can no longer buy.

I can see a trend whereby a law will eventually be passed stating that, to save dwindling Earth resources, you will no longer be able to use a full computer, only a thin client running in kiosk mode, à la 1984.